Remember when Microsoft released Tay, the AI chatbot that could respond to your tweets and chats?

If you don’t remember that, you likely remember how Tay was corrupted by nefarious individuals on the internet within hours of the bot becoming available for folks to talk to.

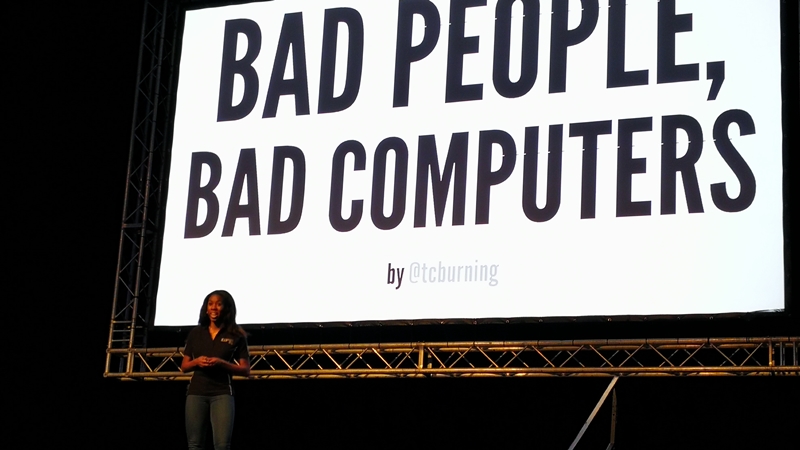

Of course, one could blame the internet for behaving like, well, the internet, but Twitter product manager Terri Burns points out another factor that could have caused Tay to shift from helpful assistant to bigotry-spewing bot: bias.

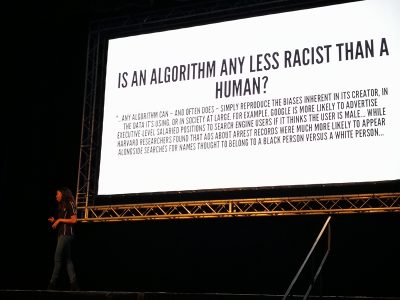

It’s not a malicious sort of bias; it stems more from privilege than anything else, says Burns. This bias plays an important role in how software is created today, especially in a tech industry where software increasingly relies on algorithms and machine learning.

In Tay’s case, the development team clearly didn’t account for trolls. Perhaps they didn’t think people would stoop that low, or they simply thought better of humans. We don’t know, but it was an oversight with dire consequences for Microsoft.

Software has flaws because humans do

“We are humans building software for humans, it’s an incredible responsibility,” Burns said during her keynote address at Devconf in Midrand this morning.

“There are over seven billion people on this planet and there are infinite combinations of 1’s and 0’s. Think about the potential, then, that technology and software have to touch people’s lives. You can build whatever you want,” Burns said.

While the product manager celebrates software and its potential, she is quick to point out that humans are flawed and that sometimes, without any malicious intent, those flaws seep into the software being created.

Tay was meant to be a fun way for users to interact with an artificial intelligence, but some users didn’t use Tay for that purpose, and the developers didn’t account for those sorts of people. Using software for something other than its intended purpose, or using it incorrectly, is what’s called an edge case. It’s not the norm for users to try to make a chatbot racist, but it’s something that needs to be accounted for.

While an AI turned into a racist bot makes for a good headline, recognising edge cases such as internet trolls can actually help teams build better software in the long run.

“Being cognisant of your bias makes avoiding problems easier,” explains Burns. Had Microsoft recognised that the internet would do its best to corrupt an innocent AI, perhaps we would never have had that chuckle at its expense.

That is your privilege

A lot of the bias we see in software and technology stems from privilege, explains Burns. Asking a user to pay R20 to play an online game, for instance, may exclude a large portion of the market you are trying to reach, because the developer assumes that everybody has a credit card or R20 to spare for the joy of entertainment.

The problem is that no developer can be expected to account for all seven billion people in the world and every edge case that may pop up.

And that’s where the big “D” comes in, the “D” here standing for diversity.

When developing software, a team should include as many people from different walks of life as possible, whether that means differences in skin colour, gender, background or even taste in music.

This lessens the likelihood of software being corrupted, broken or left to fizzle out and die due to a lack of user interest when it is released.

This is a simple yet important step in creating software, especially as technologies such as machine learning, big data, web services and security infrastructure become more widespread. Many of these technologies require that bias be left out of the equation, and that’s close to impossible if you have a cookie-cutter team filled with white men in flannel tops.

We hate to say it because it has become a cliché, but diversity is terribly important going forward.

“For the first time in history we’re learning what it means to design and engineer products for everyone in the world,” explains Burns.

Creating software that could potentially be used by seven billion people is a daunting task, and to get it right, diversity and a recognition of existing bias are key.

If that all sounds a bit airy-fairy and like corporate jargon, Burns offered a quote that might sum up her point much better, from the most unlikely of people: Ice Cube.

“You better check yoself before yo wreck yoself.”

Well put, Mr. Cube. Who knew hip hop and software development had so much in common?