For the folks who are constantly being asked why they are taking photos of their lunch, you now have an answer.

A team of researchers from the Massachusetts Institute of Technology, Universitat Politecnica de Catalunya and the Qatar Computing Research Institute has published a paper this week titled ‘Learning Cross-modal Embeddings for Cooking Recipes and Food Images’.

For those of us at home, the paper introduces Pic2Recipe, an artificial intelligence that can tell a user which ingredients went into the food in a picture it is shown.

It does this by drawing on Recipe1M, a corpus of one million recipes and 800 000 images of food.

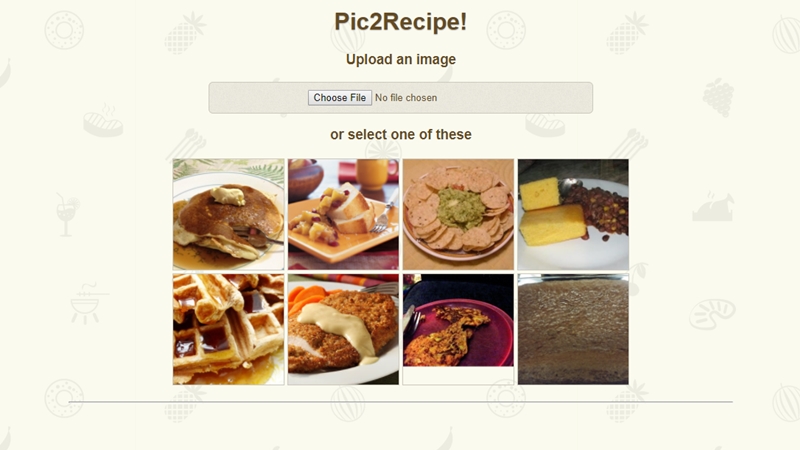

The team has put a proof of concept online for folks to test, but we urge you to remember that it is not expected to work flawlessly, at least not yet.

That said, Pic2Recipe was able to identify a bacon cheeseburger as well as the sauce it was laced with.

The research team hopes that its AI can help folks make better choices about what they eat. The flip side of the coin is that you could finally get that steakhouse grill flavour at home.

But the application of this AI goes beyond just eating right.

“More generally, the methods presented here could be gainfully applied to other ‘recipes’ like assembly instructions, tutorials, and industrial processes,” the team said in its paper.

So the next time you’re questioned about why you’re photographing your food, just respond by saying you’re working with AI. That should stop any further questions.