Between October and December 2017, YouTube removed around 8.3 million videos from its platform.

This news comes as part of YouTube’s first-ever community guidelines enforcement report. The report, which is freely available to view here, explains that “YouTube relies on a combination of people and technology to flag inappropriate content and enforce YouTube’s community guidelines”.

What’s really intriguing about the report, though, is that it clearly states that YouTube uses machine learning to flag and remove videos. A hefty 6.7 million of the 8.3 million videos removed were first flagged for review by machines, and most of those were taken down before receiving a single view. For content creators, this is clearly a problem.

However, YouTube further explained that while the algorithms can delete some content on their own, such as spam videos, they mostly forward anything suspected of violating the guidelines to human reviewers. The human reviewers are the ones in charge of deciding whether to delete the video or age-gate the content.

YouTube also stated that it receives human-flagged videos in addition to those marked by its algorithms.

A single video may be flagged multiple times and may be flagged for different reasons. YouTube received human flags on 9.3 million unique videos in Q4 2017.

When flagging a video, human flaggers can select a reason they are reporting the video and leave comments or video timestamps for YouTube’s reviewers.

The firm notes that flags come from its automated flagging system, users and members of the Trusted Flagger program.

“We rely on teams from around the world to review flagged videos and remove content that violates our terms; restrict videos (e.g., age-restrict content that may not be appropriate for all audiences); or leave the content live when it doesn’t violate our guidelines,” YouTube said.

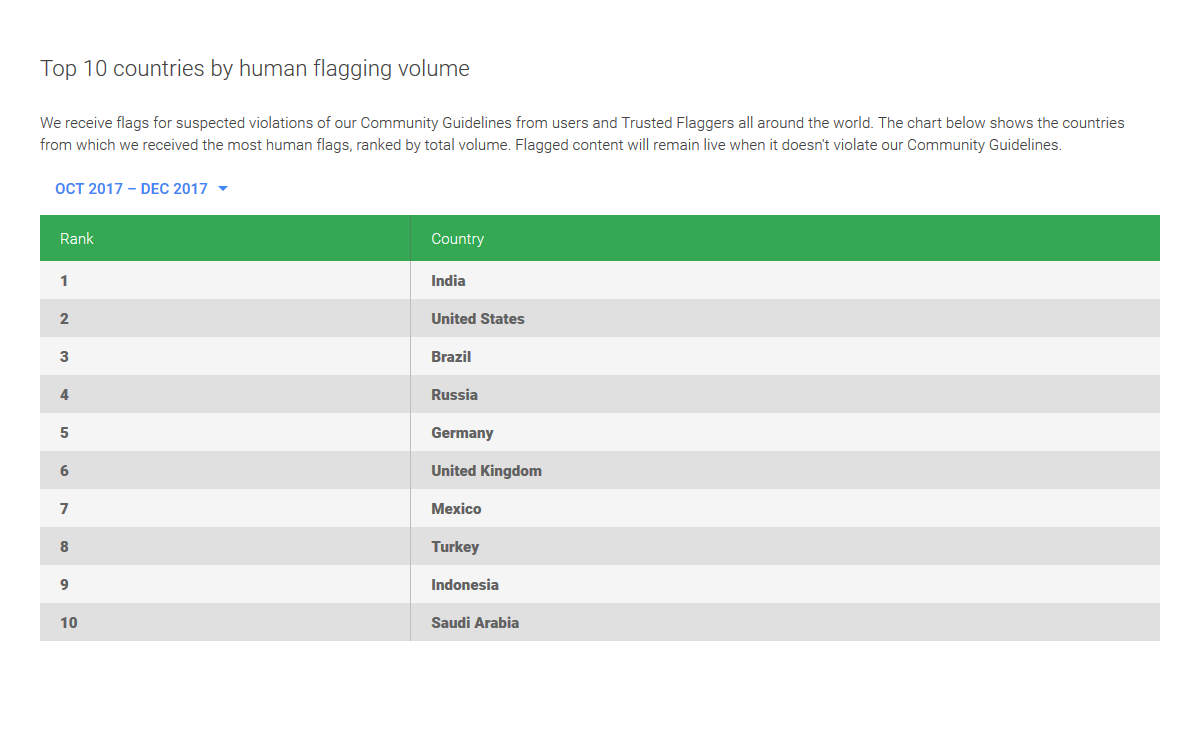

Additionally, the video-sharing platform revealed the top 10 countries from which it receives the most human-flagged video reports.

[Image Credit – Google]